Bridging logic, human performance, and operational resilience

Introduction: The Persistent Challenge of Human Error

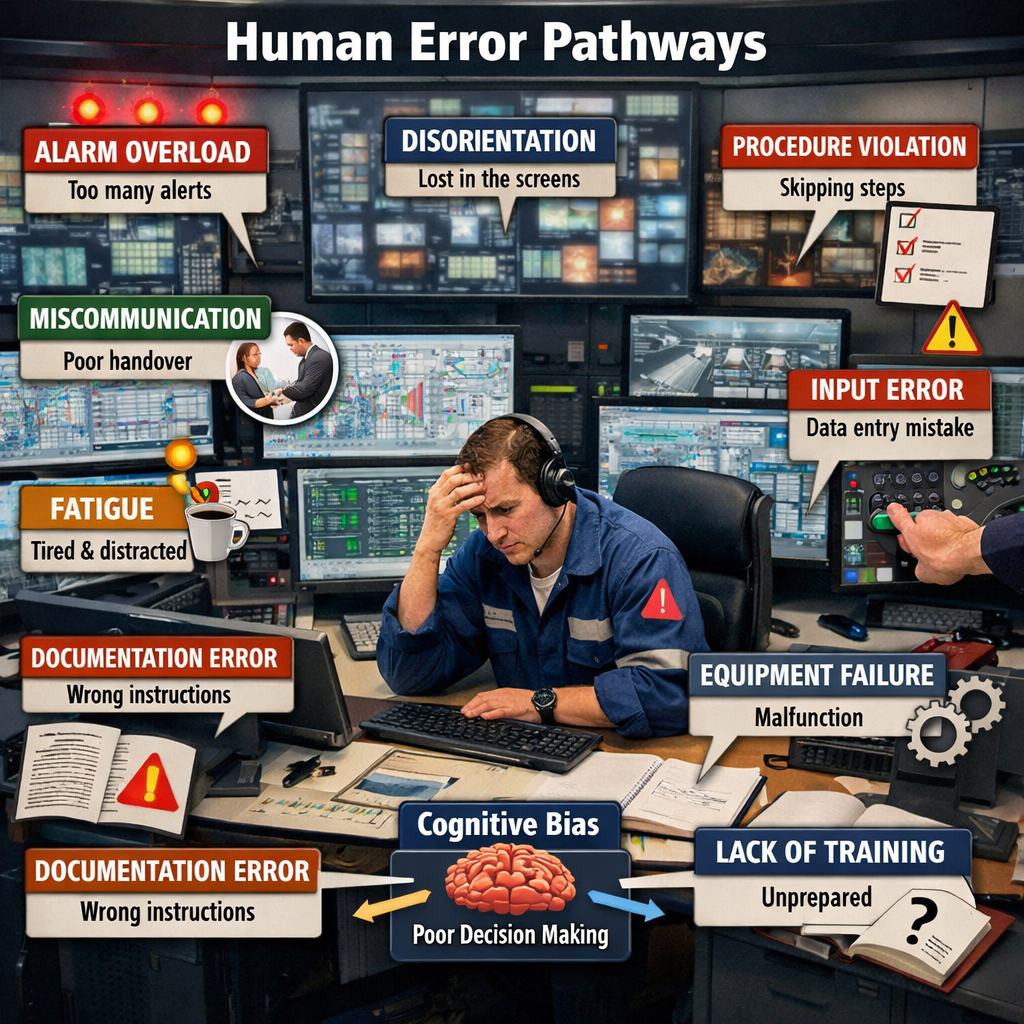

Despite decades of advancement in engineering controls, automation, and safety management systems, human error remains a dominant contributor to serious incidents in industrial environments. In high-hazard sectors—chemicals, metals, energy, and advanced manufacturing—the issue is not simply that people make mistakes. It is that:

- Systems are often designed around work-as-imagined, not work-as-done

- Weak signals of failure are present—but not recognized in time

- Decision-making under pressure introduces variability that systems fail to anticipate

Traditional approaches—training, procedures, and supervision—have plateaued in effectiveness. What is emerging now is a new capability:

The application of automated reasoning to detect, interpret, and respond to human error potential in real time.

The Opportunity for Industrial Organizations

Industrial operations are entering a period of increasing complexity—driven by advanced technologies, workforce transitions, and rising expectations for safety and performance.

At the same time, organizations are facing a growing challenge:

the loss of deeply experienced professionals who have historically served as the primary line of defense against human error.

What is being lost is not just knowledge—but the ability to recognize when conditions are aligning for failure.

The strategic question for leaders is this: How do we preserve and scale that judgment across the organization—consistently, in real time, and at global scale?

Automated reasoning represents a critical step in that evolution, enabling organizations to move from reacting to errors to understanding and managing the conditions that create them.

What Is Automated Reasoning in This Context?

Automated reasoning is the use of formal logic, structured knowledge, and inference engines to derive conclusions from known conditions.

In the context of human error detection, it moves beyond simple monitoring to answer:

- Given the conditions, what errors are likely?

- Are the safeguards sufficient for this specific situation?

- What is the most probable failure pathway right now?

This is fundamentally different from traditional analytics.

| Traditional Systems | Automated Reasoning Systems |
|---|---|
| Detect anomalies | Explain why they matter |
| Monitor conditions | Interpret risk implications |
| Trigger alarms | Evaluate decision quality and context |

While automated reasoning is often grouped under the broader umbrella of artificial intelligence, it represents a fundamentally different capability. Most AI, particularly machine learning, focuses on identifying patterns and making predictions based on data. Automated reasoning, by contrast, applies explicit logic and structured rules to determine cause-and-effect relationships and draw explainable conclusions. In practical terms, machine learning can tell you that something is changing or likely to happen, while automated reasoning explains why it matters, how it could lead to failure, and what should be done about it. This distinction is critical in industrial settings, where transparency, consistency, and defensible decision-making are essential.
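To make the contrast concrete, here is a minimal sketch of rule-based inference (forward chaining) in Python. The fact names and the two rules are hypothetical, chosen only for illustration; a production reasoning engine would be considerably richer. The point is that every conclusion comes with the rule and premises that produced it.

```python
# Hypothetical rules for illustration: (rule name, premises that must all hold, conclusion).
RULES = [
    ("R1", {"non_routine_task", "inexperienced_operator"}, "knowledge_based_error_likely"),
    ("R2", {"knowledge_based_error_likely", "no_independent_check"}, "credible_failure_pathway"),
]


def forward_chain(facts, rules):
    """Apply rules until nothing new can be derived; record why each conclusion was reached."""
    known = set(facts)
    trace = []
    changed = True
    while changed:
        changed = False
        for name, premises, conclusion in rules:
            # A rule fires when all of its premises are known and its conclusion is new.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                trace.append(f"{name}: {sorted(premises)} -> {conclusion}")
                changed = True
    return known, trace


known, trace = forward_chain(
    {"non_routine_task", "inexperienced_operator", "no_independent_check"}, RULES
)
for step in trace:
    print(step)
```

The `trace` list is what makes the result explainable: it can be shown to an operator or kept for audit, which a purely statistical score does not offer on its own.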

The Core Shift: From Data to Logic-Based Insight

Most industrial AI deployments today rely heavily on pattern recognition—identifying deviations in process variables, behaviors, or outcomes.

Automated reasoning introduces a critical layer:

It applies structured logic to determine whether current conditions create a credible pathway to human error.

This includes reasoning across:

- Task complexity

- Environmental conditions

- Time pressure

- Procedure alignment vs. actual execution

- Worker capability and experience

- System fragility and safeguards

Capturing Context: The Critical Enabler of Effective Automated Reasoning

One of the most important—and often misunderstood—aspects of automated reasoning is how context is captured, structured, and interpreted.

Without context, even the most advanced reasoning engine becomes little more than a rules engine applying generic logic. With context, it becomes something far more powerful:

A system that understands not just what is happening—but what it means under the current conditions.

Why Context Matters in Human Error Detection

Human error does not occur in isolation. It emerges from the interaction between:

- The task being performed

- The conditions under which it is performed

- The capabilities and state of the individual

- The design and resilience of the system

The same task can be:

- Low risk in one context

- High risk in another

Example:

Opening a valve:

- Routine, low risk during normal operations

- High risk during startup, under time pressure, with similar valve configurations

👉 The difference is not the task—it is the context

How Automated Reasoning Captures Context

Automated reasoning systems structure context into four primary dimensions, each representing a different aspect of how work is actually performed.

1. Task Context (What is being done)

This dimension defines the inherent characteristics of the work itself and how prone it is to error under normal conditions.

- Routine vs. non-routine work

- Task complexity and step count

- Precision required

- Known failure modes

👉 How inherently error-prone is this task?

2. Operational Context (Under what conditions)

This dimension captures the external pressures and environmental conditions that influence how the task is executed.

- Time pressure and production demand

- Shift timing (night vs. day)

- Environmental factors (heat, noise, visibility)

- Concurrent work and distractions

👉 What external pressures are influencing performance?

3. Human Context (Who is performing the work)

This dimension focuses on the capabilities and current state of the individual or team performing the task.

- Experience and familiarity

- Fatigue and cognitive load

- Interruptions and task switching

- Supervision and crew dynamics

👉 How capable is the system—through people—right now?

4. System Context (How well the system supports the work)

This dimension evaluates how effectively the system design, procedures, and safeguards support successful execution.

- Procedure quality and usability

- Equipment design (e.g., similarity, ergonomics)

- Safeguards and interlocks

- Prior incidents or near misses

👉 Is the system enabling success—or inviting failure?
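One way to picture the four dimensions is as a structured record that a reasoning layer can query. The sketch below is an assumption about how such a record might look; the field names, the `error_likely_conditions` function, and the specific checks are illustrative and not taken from any particular product. It revisits the valve example: the same task, evaluated in two different contexts.

```python
from dataclasses import dataclass


@dataclass
class TaskContext:          # what is being done
    name: str
    routine: bool
    step_count: int


@dataclass
class OperationalContext:   # under what conditions
    phase: str              # assumed values: "normal" or "startup"
    time_pressure: bool
    night_shift: bool


@dataclass
class HumanContext:         # who is performing the work
    experienced: bool
    fatigued: bool
    interrupted: bool


@dataclass
class SystemContext:        # how well the system supports the work
    procedure_usable: bool
    lookalike_equipment: bool
    interlock_present: bool


@dataclass
class WorkContext:
    task: TaskContext
    operational: OperationalContext
    human: HumanContext
    system: SystemContext


def error_likely_conditions(ctx):
    """Return the named conditions (hypothetical checks) that currently hold."""
    checks = {
        "non-routine task": not ctx.task.routine,
        "startup phase": ctx.operational.phase == "startup",
        "time pressure": ctx.operational.time_pressure,
        "fatigue": ctx.human.fatigued,
        "look-alike equipment": ctx.system.lookalike_equipment,
        "no interlock": not ctx.system.interlock_present,
    }
    return [name for name, present in checks.items() if present]


valve = TaskContext(name="open valve", routine=True, step_count=1)
crew = HumanContext(experienced=True, fatigued=False, interrupted=False)
normal = WorkContext(valve, OperationalContext("normal", False, False), crew,
                     SystemContext(True, False, True))
startup = WorkContext(valve, OperationalContext("startup", True, False), crew,
                      SystemContext(True, True, True))

print(error_likely_conditions(normal))
print(error_likely_conditions(startup))
```

The task object is identical in both cases; only the surrounding context changes what the function reports.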

From Context to Insight: The Reasoning Layer

Once context is captured, automated reasoning evaluates how these dimensions interact, rather than treating them as isolated inputs.

Example interaction:

- Moderate complexity

- Moderate fatigue

- Moderate time pressure

Individually → acceptable

Combined → elevated risk of error

Context is not additive—it is interactive

This mirrors what experienced environment, health, and safety (EHS) leaders do intuitively: recognizing when normal conditions combine into abnormal risk.
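The interaction effect can be sketched as a simple rule. The three-level scale, the alert threshold, and the "three moderate factors" cutoff below are assumptions made for illustration; real thresholds would come from site experience and human performance data.

```python
# Assumed ordinal scale for each contextual factor.
LEVELS = {"low": 0, "moderate": 1, "high": 2}


def individual_flags(factors):
    """Factors that would raise an alert on their own (assumed threshold: 'high')."""
    return [name for name, level in factors.items() if LEVELS[level] >= LEVELS["high"]]


def combined_risk(factors, min_moderate=3):
    """Interaction rule: several moderate factors together count as elevated risk."""
    if individual_flags(factors):
        return "elevated"
    moderate_or_worse = sum(
        1 for level in factors.values() if LEVELS[level] >= LEVELS["moderate"]
    )
    return "elevated" if moderate_or_worse >= min_moderate else "acceptable"


factors = {"complexity": "moderate", "fatigue": "moderate", "time_pressure": "moderate"}
print(individual_flags(factors))  # no single factor triggers an alert
print(combined_risk(factors))     # the combination does
```

A factor-by-factor check passes this situation, while the combined rule flags it, which is the behavior described above.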

Context as a Dynamic Input (Not a Static Snapshot)

Context in industrial operations is not fixed; it evolves continuously as work progresses and conditions change.

- A task interruption occurs

- A supervisor leaves the area

- Process conditions drift

- Time pressure increases

The system recalculates in real time:

“Given the updated context, what is the new error likelihood?”
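A minimal sketch of this re-evaluation loop follows. The `ContextMonitor` class, the condition names, and the "two or more active conditions" rule are hypothetical; the sketch only shows the pattern of updating one piece of context and immediately re-running the assessment.

```python
def assess(state):
    """Hypothetical rule: two or more active error-likely conditions means elevated risk."""
    active = sorted(name for name, present in state.items() if present)
    level = "elevated" if len(active) >= 2 else "normal"
    return level, active


class ContextMonitor:
    """Holds the current context and re-assesses it whenever an event arrives."""

    def __init__(self, initial_state):
        self.state = dict(initial_state)
        self.history = [assess(self.state)[0]]

    def on_event(self, condition, present):
        # Update one condition, then re-run the assessment on the full context.
        self.state[condition] = present
        level, active = assess(self.state)
        self.history.append(level)
        return level, active


monitor = ContextMonitor({
    "task_interrupted": False,
    "supervisor_absent": False,
    "process_drift": False,
    "time_pressure": False,
})
monitor.on_event("task_interrupted", True)
level, active = monitor.on_event("supervisor_absent", True)
print(level, active)
```

In practice the events would arrive from the plant systems listed in the next section rather than from direct method calls.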

Practical Sources of Context Data

In practice, context is assembled from multiple digital and operational systems across the enterprise.

- Digital permit-to-work systems

- Process data from distributed control systems (DCS) and SCADA

- EHS platforms and incident history

- Training and workforce systems

- Wearables and environmental sensors

- Production schedules and constraints

The value is not the data alone—but how it is structured for reasoning

The Limitation: Context vs. Human Judgment

Even with advanced data capture, automated reasoning cannot fully replicate the richness of human situational awareness and experience.

- Subtle hesitation or uncertainty

- Team dynamics and trust

- Intuition developed through experience

- The sense that “something isn’t right”

These remain distinctly human capabilities.

Strategic Implication

The effectiveness of automated reasoning depends directly on how accurately and completely context is captured and represented.

This shifts the leadership question from:

- “How good is the algorithm?”

to:

- “How well do we capture work as it is actually performed?”

How Automated Reasoning Detects Human Error Potential

1. Contextual Rule Evaluation (Beyond Static Procedures)

Automated reasoning evaluates whether procedures and expectations align with actual working conditions in real time. It checks whether:

- Procedures fit current conditions

- Workers are likely to deviate

- System design forces adaptation

2. Weak Signal Amplification

The system connects small, seemingly insignificant signals into a coherent picture of emerging risk.

- Minor deviations

- Workarounds

- Near misses
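As a rough illustration, weak signals can be grouped by where they occur and flagged when several different kinds appear close together in time. The signal format, the 14-day window, and the thresholds below are assumptions, not recommended values.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def emerging_risk(signals, now, window=timedelta(days=14), min_kinds=2, min_count=3):
    """Group recent signals by unit; flag units where several kinds of signal co-occur."""
    by_unit = defaultdict(list)
    for unit, kind, when in signals:
        if now - when <= window:  # ignore signals older than the window
            by_unit[unit].append(kind)
    return {
        unit: sorted(set(kinds))
        for unit, kinds in by_unit.items()
        if len(kinds) >= min_count and len(set(kinds)) >= min_kinds
    }


now = datetime(2025, 1, 31)
signals = [
    ("Reactor A", "minor_deviation", datetime(2025, 1, 20)),
    ("Reactor A", "workaround", datetime(2025, 1, 25)),
    ("Reactor A", "near_miss", datetime(2025, 1, 29)),
    ("Reactor B", "minor_deviation", datetime(2025, 1, 28)),
    ("Reactor A", "workaround", datetime(2024, 12, 1)),  # outside the window
]
print(emerging_risk(signals, now))
```

Each signal on its own would be easy to dismiss; the grouping is what turns them into a picture worth acting on.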

3. Error-Likely Situation Modeling

Human performance frameworks are applied to determine which types of errors are most likely under current conditions.

- Skill-based errors

- Rule-based errors

- Knowledge-based errors
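The three categories follow the skill, rule, and knowledge distinction used in human performance work. A deliberately simplified sketch of how conditions might be mapped to the most likely error type is shown below; the three boolean inputs and the decision order are assumptions for illustration, and a real model would weigh many more factors.

```python
def likely_error_mode(routine: bool, procedure_fits: bool, experienced: bool) -> str:
    """Simplified, assumed mapping from working conditions to the most likely error type."""
    if not routine and not procedure_fits:
        # No familiar pattern and no applicable rule: the worker must reason from scratch.
        return "knowledge-based"
    if routine and procedure_fits and experienced:
        # Well-practiced work runs on habit, so slips and lapses dominate.
        return "skill-based"
    # Otherwise the worker leans on rules that may be misapplied or may not fit.
    return "rule-based"


print(likely_error_mode(routine=True, procedure_fits=True, experienced=True))
print(likely_error_mode(routine=True, procedure_fits=False, experienced=True))
print(likely_error_mode(routine=False, procedure_fits=False, experienced=False))
```

Knowing the likely error type matters because the countermeasures differ: attention aids for slips, procedure fixes for rule errors, and expert support for knowledge-based situations.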

4. Dynamic Safeguard Evaluation

Safeguards are continuously evaluated for their presence, effectiveness, and likelihood of proper use.

- Availability

- Functionality

- Likelihood of correct use
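The three criteria can be combined into a simple sufficiency check. In the sketch below, the `Safeguard` fields, the 0.8 cutoff for likelihood of correct use, and the requirement of two effective safeguards are all assumed values for illustration.

```python
from dataclasses import dataclass


@dataclass
class Safeguard:
    name: str
    available: bool        # is it present for this task?
    functional: bool       # is it working right now?
    p_correct_use: float   # assumed estimate (0 to 1) that it will be used correctly


def effective_safeguards(safeguards, min_p_use=0.8):
    """A safeguard counts only if it meets all three criteria."""
    return [
        s.name
        for s in safeguards
        if s.available and s.functional and s.p_correct_use >= min_p_use
    ]


def safeguards_sufficient(safeguards, required=2, min_p_use=0.8):
    return len(effective_safeguards(safeguards, min_p_use)) >= required


safeguards = [
    Safeguard("high-temperature interlock", True, True, 0.95),
    Safeguard("second-operator check", True, True, 0.5),   # unlikely under time pressure
    Safeguard("gas detector", False, True, 0.99),          # out for calibration
]
print(effective_safeguards(safeguards))
print(safeguards_sufficient(safeguards))
```

On paper three safeguards exist; under current conditions only one can be relied on, which is the gap this evaluation is meant to expose.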

5. Decision Quality Assessment

The system evaluates the conditions under which decisions are being made to identify degradation in decision quality.

- Cognitive overload

- Bias patterns

- Goal conflict

- Degraded situational awareness

Integration with Industrial Systems

Automated reasoning does not function in isolation; it is integrated into the broader digital and operational ecosystem.

- Process control systems (DCS/SCADA)

- EHS management platforms

- Permit-to-work systems

- Wearables and monitoring tools

- Incident and near-miss databases

This enables reasoning across both:

- Technical system state

- Human system state

A Practical Example: Chemical Reactor Operation

In a typical industrial scenario, automated reasoning evaluates multiple contextual inputs to identify emerging human error risk.

Inputs:

- Inexperienced operator

- Non-routine process conditions

- Prior deviations

- High time pressure

Output:

- Elevated knowledge-based error risk

- Likely parameter misinterpretation

- Insufficient safeguards

Recommended actions:

- Add second operator verification

- Reduce ramp rate

- Increase supervision
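The reactor example can be written out as a small set of rules. The rule conditions and the pairing of each finding with an action are assumptions made for this sketch; the text above lists the inputs, outputs, and actions but does not specify how they map to one another.

```python
# Hypothetical pairing of findings with responses, using the wording from the example.
ACTIONS = {
    "elevated knowledge-based error risk": "increase supervision",
    "likely parameter misinterpretation": "reduce ramp rate",
    "insufficient safeguards": "add second operator verification",
}


def assess_reactor_task(inputs):
    """Derive findings from the inputs, then look up a recommended action for each."""
    findings = []
    if inputs["inexperienced_operator"] and inputs["non_routine_conditions"]:
        findings.append("elevated knowledge-based error risk")
    if inputs["prior_deviations"] and inputs["non_routine_conditions"]:
        findings.append("likely parameter misinterpretation")
    # Assumed rule: time pressure on top of any other finding makes existing safeguards insufficient.
    if inputs["high_time_pressure"] and findings:
        findings.append("insufficient safeguards")
    return findings, [ACTIONS[f] for f in findings]


findings, actions = assess_reactor_task({
    "inexperienced_operator": True,
    "non_routine_conditions": True,
    "prior_deviations": True,
    "high_time_pressure": True,
})
for finding, action in zip(findings, actions):
    print(f"{finding} -> {action}")
```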

Benefits for Industrial Organizations

Proactive Risk Identification

Automated reasoning enables organizations to anticipate failure before it occurs by identifying error-likely conditions in advance.

- Shift from “What went wrong?”

- To “What is likely to go wrong next?”

Consistency Across Operations

It standardizes decision-making logic while still adapting to local context and conditions.

- Standardized logic

- Contextual flexibility

Scalable Expertise

The knowledge and judgment of experienced professionals can be embedded and applied consistently across the organization.

- Captures expert reasoning

- Distributes it across sites

Stronger Governance

Automated reasoning provides transparency and defensibility in risk-based decision-making.

- Clear logic pathways

- Audit-ready decisions

Limitations

Automated reasoning enhances decision-making but does not replace the uniquely human aspects of safety leadership and performance. It can identify error-likely conditions, but it cannot by itself ensure that people act on them.

Trust-Building

Trust is the foundation of safety performance, built through credibility and relationships over time.

- Enables open reporting of risks and weak signals

- Drives whether people act on guidance

Automated reasoning can recommend actions, but it cannot build trust or credibility.

Without trust, even the right answer may not be followed

Real-Time Influence

Safety often depends on influencing decisions in the moment under pressure.

- Challenging shortcuts

- Reinforcing the right priorities

Automated reasoning can identify risk, but it cannot persuade, adapt messaging, or manage resistance.

Being right is not enough—impact requires influence

Deep Intuition

Experience creates an intuitive sense of risk beyond formal data.

- Recognizing subtle inconsistencies

- Acting on incomplete signals

Automated reasoning lacks this depth of experiential judgment and the ability to act confidently in ambiguity.

Intuition bridges the gap between data and reality

Cultural Awareness

Organizational culture shapes how work is actually performed.

- Informal norms vs. formal procedures

- Willingness to speak up or deviate

Automated reasoning struggles to fully capture these dynamics.

Culture determines how work really gets done

Closing Insight on Limitations

Automated reasoning can identify when conditions are right for failure.

But only people can:

- Build trust

- Influence decisions

- Interpret nuance

- Shape culture

That is where safety ultimately succeeds—or fails.

The Future: Human + Reasoning Systems

The most effective safety systems will not rely on a single form of intelligence. Instead, they will combine complementary capabilities into a cohesive system that enhances both insight and action.

At its core, this model integrates three distinct but interdependent strengths:

Machine Learning → Pattern Detection

Machine learning excels at identifying patterns across large, complex datasets that are not visible through traditional analysis.

- Detects anomalies, trends, and weak signals

- Identifies correlations across operations, time, and conditions

- Continuously improves as more data becomes available

Its strength lies in answering:

“What is changing, and where should we pay attention?”

Automated Reasoning → Logical Interpretation

Automated reasoning translates data and conditions into structured, explainable conclusions about risk.

- Evaluates cause-and-effect relationships

- Assesses whether conditions create credible failure pathways

- Provides transparent, auditable logic for decisions

Its role is to answer:

“Given these conditions, what does it mean—and what is likely to happen next?”

Human Expertise → Contextual Judgment

Human expertise brings the ability to interpret nuance, adapt to ambiguity, and influence outcomes in real time.

- Applies experience, intuition, and situational awareness

- Adjusts decisions based on context not fully captured in data

- Builds trust and drives action across the organization

This is the layer that answers:

“What should we do—and how do we ensure it actually happens?”

How These Capabilities Work Together

Individually, each capability is powerful but incomplete. Together, they create a system that is both analytically strong and operationally effective.

- Machine learning identifies emerging signals

- Automated reasoning explains risk pathways and implications

- Human expertise ensures the right decisions are made and executed

Insight without interpretation creates noise.

Interpretation without action creates delay.

Action without insight creates risk.

The integration of all three closes that gap.
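The hand-off between the three layers can be sketched as a short pipeline. Everything here is a stand-in: a z-score check substitutes for a trained machine learning model, the context flags and routing messages are hypothetical, and the final step only routes a finding to a person rather than deciding anything itself.

```python
from statistics import mean, stdev


def detect_signal(history, latest, z_threshold=3.0):
    """Stand-in for a learned model: flag a reading far from its recent history."""
    mu, sigma = mean(history), stdev(history)
    return sigma > 0 and abs(latest - mu) / sigma >= z_threshold


def interpret(signal, context):
    """Reasoning step: decide whether the signal matters in this context, and say why."""
    if not signal:
        return None
    reasons = sorted(name for name, present in context.items() if present)
    if reasons:
        return {"risk": "elevated", "because": reasons}
    return {"risk": "monitor", "because": []}


def route_to_human(finding):
    """Human step: the system proposes, a person decides and acts."""
    if finding is None:
        return "no action"
    if finding["risk"] == "elevated":
        return "review required by shift supervisor"
    return "log for next shift handover"


history = [100.0, 101.0, 99.0, 100.5, 99.5]
context = {"inexperienced_operator": True, "high_time_pressure": True, "night_shift": False}

finding = interpret(detect_signal(history, 104.0), context)
print(finding)
print(route_to_human(finding))
```

Removing any one step reproduces the failure modes named above: a flag with no explanation, an explanation nobody acts on, or an action taken without evidence.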

Strategic Implication

This combined model represents a shift from fragmented tools to integrated decision systems.

Organizations that embrace this approach will:

- Detect risk earlier

- Understand it more clearly

- Act on it more effectively

Closing Insight on Human + Machine Synergies

The future of safety is not human or machine—it is human with machine.

When pattern recognition, logical reasoning, and human judgment operate together, safety performance moves from reactive to truly predictive and resilient.

Final Perspective

Automated reasoning represents a fundamental shift in how industrial organizations approach human error.

From reacting to failure → understanding the conditions that make failure likely

When fueled by rich context, it enables organizations to:

- Anticipate human error

- Strengthen system resilience

- Scale expertise across operations

And ultimately:

Create systems that actively support people in making the right decisions—especially when it matters most.
