A site can go months without a recordable injury and still have repeated line-of-fire exposures, inconsistent energy isolation practices, mobile equipment near misses, or critical controls that are quietly drifting. The numbers may suggest the system is healthy. The risk may be telling a very different story.

That is why Serious Injury and Fatality, or SIF, reduction must be understood as more than a safety program. It is one of the clearest leading indicators of Operational Integrity. When an organization focuses on SIF potential, it is not only trying to prevent the worst outcomes. It is testing the strength of the operating system itself.

Are critical risks known? Are controls defined? Are those controls understood? Are they being used? Are leaders verifying effectiveness? Are weak signals moving quickly enough to drive action? These questions sit at the intersection of safety performance, operational discipline, and business excellence.

For many years, occupational safety and health performance has been framed largely through the lens of compliance: Are we meeting the regulation? Are we satisfying the requirement? Are we documenting the activity? Compliance is essential. It is the foundation of a responsible safety management system. But compliance should never be mistaken for the ceiling of safety performance.

Compliance is the floor.

If our goal is to prevent serious injuries and fatalities, we must move beyond asking only, “What is required?” and begin asking, “What is possible?” That shift is where SIF reduction becomes the leading edge of Operational Integrity.

Compliance Is the Floor

Every organization must comply with applicable laws, standards, and expectations. That is non-negotiable. But compliance alone does not guarantee that the highest-consequence risks are being effectively controlled.

A company can be compliant and still have significant exposure to uncontrolled energy, line-of-fire hazards, work at height, confined space risks, mobile equipment interactions, chemical exposure, process safety events, or bypassed critical controls. These exposures may not always show up clearly in traditional injury statistics, but they carry the potential for severe or fatal outcomes.

That is why a mature safety culture must move from reactive compliance to proactive risk management. Reactive compliance asks, “Did we meet the requirement?” Proactive risk management asks, “Are we controlling the hazards that can seriously hurt or kill someone?”

That distinction matters. It changes the conversation from activity to outcome. It shifts leadership attention from simply tracking what already happened to understanding what could happen next. It also helps the C-suite see safety performance not as a cost center, but as an essential part of how the business operates with discipline, reliability, and integrity.

The Importance of Better Defining SIFs

One of the most important developments in the safety profession is the effort to better define what we mean by Serious Injury and Fatality prevention. The National Safety Council and its Campbell Institute have helped advance this work by encouraging organizations to move beyond broad injury classifications and focus more specifically on life-threatening, life-altering, and fatal events.

This direction is important because traditional injury rates do not always predict fatality and severe-event potential. Recordable injury rates can decline while severe and fatal risks remain present. A site may appear to be performing well on lagging metrics while still carrying exposures that could result in catastrophic harm.

That is why definition matters. If an organization does not clearly define SIFs and potential SIFs, it may miss the signals that matter most. A minor injury may have SIF potential if the energy, exposure, or failed control could have produced a life-altering outcome. A near miss may be more important than its lack of injury suggests. A bypassed safeguard, an uncontrolled energy exposure, or a dropped object may tell the organization more about fatality potential than a first-aid case.

This is consistent with the direction of the National Safety Council’s SIF Prevention Model, which emphasizes identifying serious and potential serious events, understanding precursors, implementing controls, and verifying that those controls are effective. The value of a clearer SIF definition is that it moves the organization from injury classification to risk understanding.

If we only classify events by what happened, we may underreact to high-potential events. If we classify events by what could reasonably have happened, we create a stronger path to prevention.

Better SIF definitions help leaders ask better questions:

- Was serious energy present?

- Were critical controls missing, weak, bypassed, or misunderstood?

- Could the outcome have been life-altering under slightly different circumstances?

- Does this event reveal a system weakness?

- Could the same exposure exist elsewhere?

This aligns directly with Operational Integrity. Better SIF definitions help the organization focus on the true risk profile of the work, not just the injury outcome. They help the business define the risk, establish the control, verify effectiveness, learn continuously, and improve the system.

In practical terms, better SIF definitions help safety leaders and business leaders separate low-consequence activity from high-consequence potential. They help organizations see past the injury label and focus on the exposure, the energy, the control, and the system condition. That is the foundation of proactive SIF reduction, and it is another reason SIF prevention belongs at the center of Operational Integrity.
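To make the screening questions concrete, they can be expressed as a simple triage rule. The Python sketch below encodes the first three questions, plus whether a life-altering outcome actually occurred. The field names, the category labels, and the rule itself are illustrative assumptions, not the National Safety Council's classification method. Under this rule, an event counts as potential SIF when serious energy was present and either a critical control failed or a worse outcome was plausible. The last two questions, about system weakness and similar exposures elsewhere, guide the response rather than the classification, so the sketch leaves them out.

```python
from dataclasses import dataclass
from enum import Enum


class SifCategory(Enum):
    ACTUAL_SIF = "actual SIF"
    POTENTIAL_SIF = "potential SIF"
    NOT_SIF_POTENTIAL = "not SIF potential"


@dataclass
class EventReview:
    # Hypothetical fields; each mirrors one of the screening questions.
    life_altering_outcome: bool    # did a life-threatening, life-altering, or fatal outcome occur?
    serious_energy_present: bool   # was serious energy present?
    critical_control_failed: bool  # was a critical control missing, weak, bypassed, or misunderstood?
    could_be_life_altering: bool   # could slightly different circumstances have made it life-altering?


def classify(event: EventReview) -> SifCategory:
    """Classify by what could reasonably have happened, not only by what did (hypothetical rule)."""
    if event.life_altering_outcome:
        return SifCategory.ACTUAL_SIF
    if event.serious_energy_present and (
        event.critical_control_failed or event.could_be_life_altering
    ):
        return SifCategory.POTENTIAL_SIF
    return SifCategory.NOT_SIF_POTENTIAL
```

Under this rule, a dropped-object near miss with no injury is classified as potential SIF rather than dismissed as a non-event, because serious energy was present and a barrier was missing.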

Why SIF Reduction Belongs Inside Operational Integrity

Once an organization has a clear definition of SIFs and potential SIFs, the next question is where that work should live. It should not sit off to the side as a separate safety initiative. It belongs inside Operational Integrity.

Operational Integrity is the bridge between safety performance and business performance. At its core, Operational Integrity means the organization operates the way it intends to operate. It means risk is understood, expectations are clear, safeguards are in place, people are competent, work is planned, and leaders verify that critical controls are effective.

SIF reduction belongs inside Operational Integrity because SIF potential often reveals where the operating system is under stress. A serious injury or fatality rarely occurs because of one isolated failure. More often, it reflects a combination of conditions: a weak planning process, a degraded safeguard, incomplete hazard recognition, normalization of deviance, a supervision gap, a contractor interface issue, a change that was not fully understood, or a critical control that existed on paper but not in practice.

These are not just safety issues. They are Operational Integrity issues.

When a lockout/tagout step is skipped, the issue is not only compliance with an energy control procedure. It may also be a question of work planning, training, production pressure, supervision, verification, and leadership expectations. When a line-of-fire exposure becomes routine, the issue is not only whether a hazard was observed. It may also be a question of task design, equipment layout, job sequencing, pre-job planning, and whether employees feel empowered to stop and correct the condition.

When a mobile equipment near miss repeats across several areas, the issue is not only operator behavior. It may also be a question of traffic management, pedestrian controls, visibility, maintenance, contractor coordination, and site leadership. That is why SIF reduction is so powerful. It forces the organization to look beneath the event and examine the operating conditions that allowed serious risk to exist.

Operational Integrity asks whether the system is working. SIF reduction shows where the system may fail with the greatest consequence. The two are inseparable.

A strong SIF program helps an organization test the health of its Operational Integrity system in several important ways:

- It clarifies which risks matter most.

- It defines the controls that must not fail.

- It strengthens operating discipline.

- It improves learning from weak signals.

- It connects safety to business performance.

This is why SIF reduction is not a side initiative. It is a leading component of how a business knows whether it is operating with integrity. When Operational Integrity improves, safety improves. But so do reliability, quality, productivity, trust, and business resilience. The organization becomes better at identifying risk, making decisions, allocating resources, and learning before failure occurs.

Operational Integrity drives Business Excellence, and SIF reduction gives Operational Integrity a sharp focus on the risks that can cause the greatest harm.

From SIF Reduction to Business Excellence

Business Excellence is not achieved by safety performance alone. It is achieved when the organization consistently delivers results through disciplined, reliable, and responsible operations. SIF reduction contributes directly to that outcome.

When leaders focus on SIF potential, they are focusing on the parts of the business where failure carries the highest consequence. They are asking whether the organization understands its most serious risks, whether critical controls are reliable, whether people are engaged in identifying weak signals, and whether the business can act before an event occurs.

That is business discipline.

A strong SIF program improves more than the safety scorecard. It improves how the business sees risk. It improves how leaders prioritize resources. It improves how operations, maintenance, engineering, contractors, and safety professionals work together. It improves how learning moves across the organization.

Most importantly, it helps prevent the events that can permanently harm people, disrupt operations, damage trust, and threaten the organization’s license to operate. SIF reduction is not only about preventing loss. It is about building a more resilient and higher-performing organization.

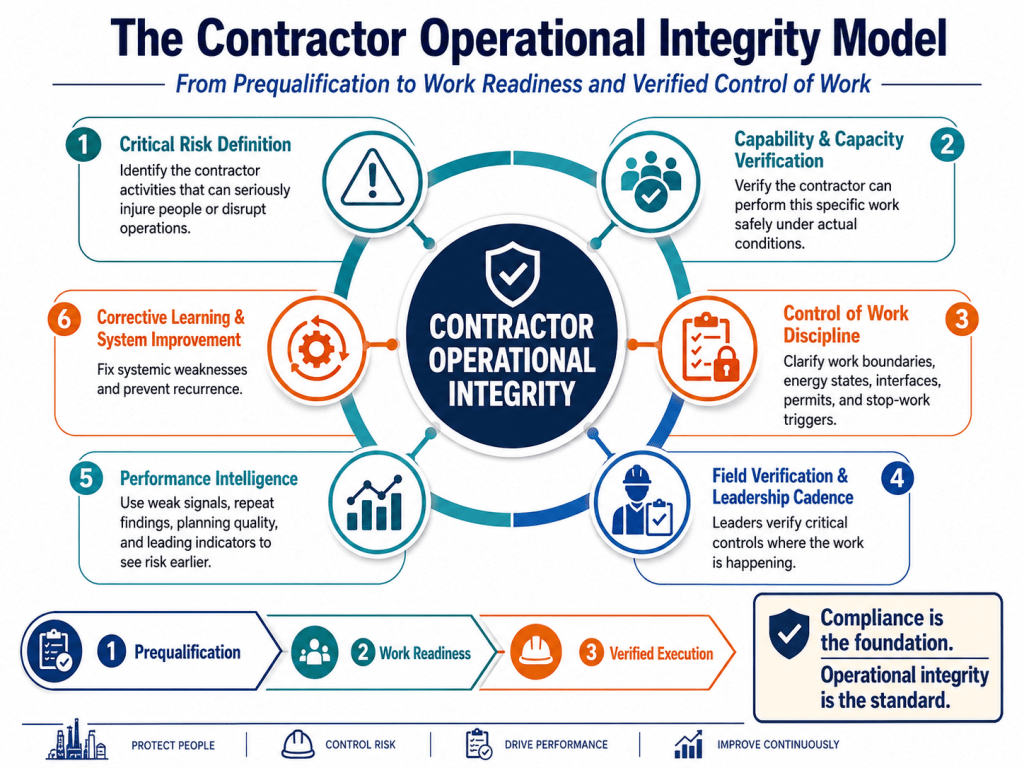

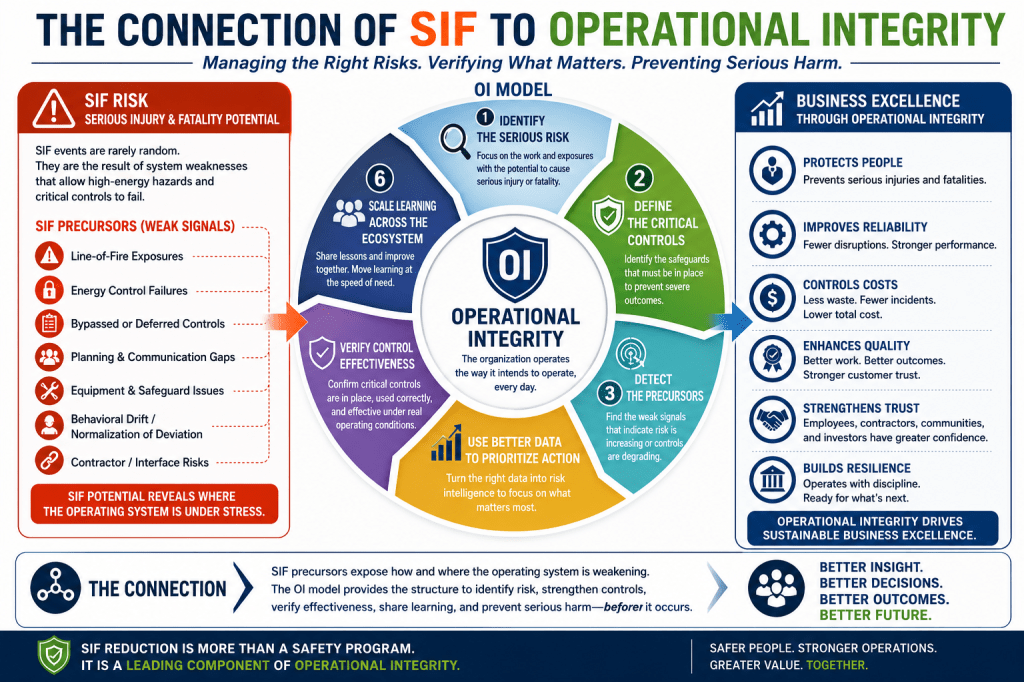

The SIF-to-Operational Integrity Model

For SIF reduction to create value, it must be practical. The model does not need to be complicated. In fact, simplicity is a strength. A useful SIF-to-Operational Integrity model can be built around six steps:

- Identify the serious risk.

- Define the critical controls.

- Detect the precursors.

- Use better data to prioritize action.

- Verify control effectiveness.

- Scale learning across the ecosystem.

The first step is to identify the work and exposures that have the potential to cause serious injury or fatality. This may include uncontrolled energy, line of fire, work at height, confined space entry, mobile equipment interaction, process safety exposure, chemical exposure, lifting operations, or other high-consequence activities specific to the organization. The goal is not to create a long list of every possible hazard. The goal is to focus on the risks that matter most.

Once serious risks are identified, the next step is to define the controls that must be in place to prevent severe outcomes. These may include engineering controls, isolation procedures, permits, physical barriers, interlocks, exclusion zones, equipment safeguards, competency requirements, supervision, planning processes, or stop-work expectations. The key is to distinguish between general safety activity and controls that are truly critical to preventing serious harm.

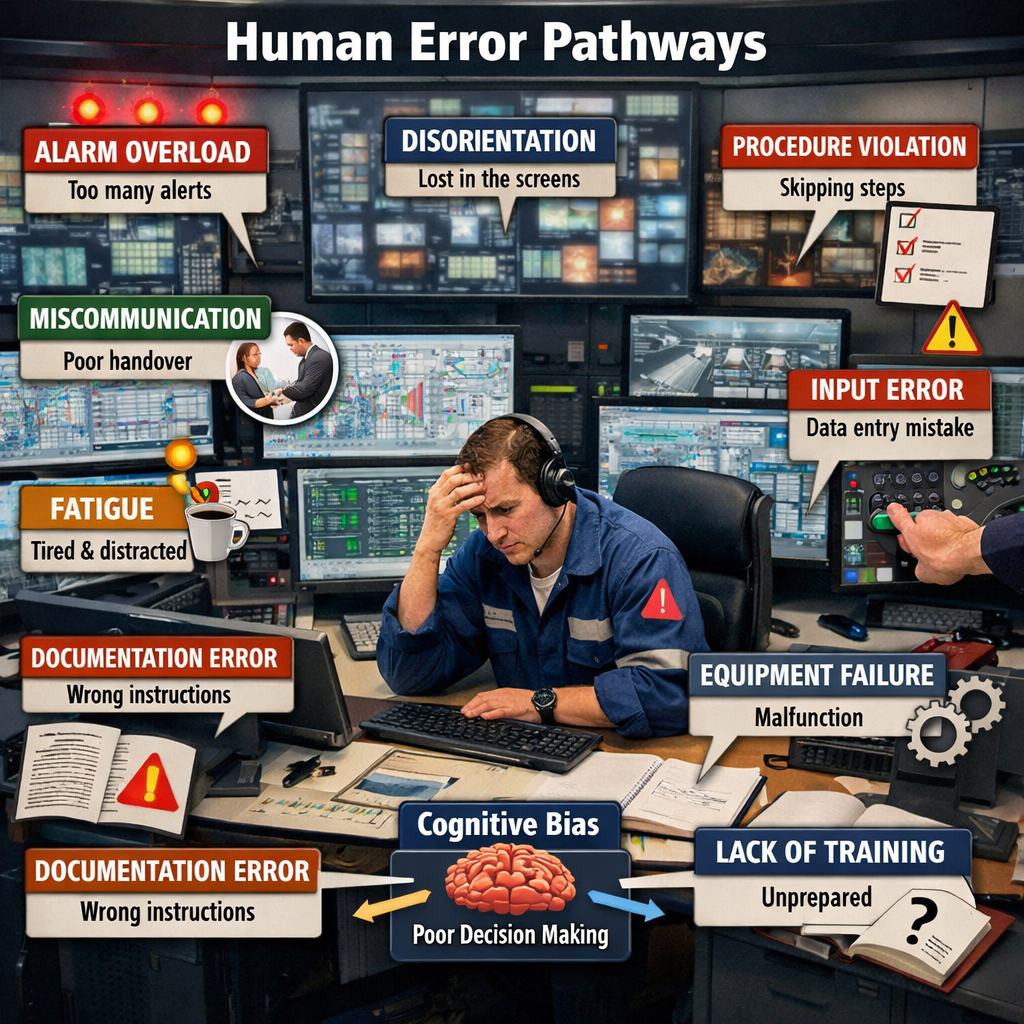

The third step is to detect the precursors. SIF precursors are the weak signals that tell us serious risk may be increasing. These may include near misses, repeated deviations, degraded safeguards, bypassed controls, incomplete permits, inadequate pre-job planning, recurring observations, maintenance deferrals, contractor interface issues, or employee concerns. A mature organization treats these signals as valuable intelligence, not administrative noise.

The fourth step is to use better data to prioritize action. More data does not automatically create better decisions. The real question is whether data improves risk intelligence. Better data helps leaders understand where serious exposures exist, whether critical controls are understood, whether those controls are being used, where weak signals are emerging, and where intervention is needed before someone gets hurt.

The fifth step is to verify control effectiveness. A control is only valuable if it works when needed. Operational Integrity depends on verification. Leaders must know whether critical controls exist, whether they are being used correctly, and whether they remain effective under real operating conditions. Verification moves the organization beyond assumption. It changes the conversation from “we have a procedure” to “we know the control is working.”
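Verification can be tracked with very little machinery. The following Python sketch assumes a hypothetical record for each field check of a critical control. Each record captures the three conditions named above: the control exists, it is used correctly, and it is effective under real operating conditions. The sketch then flags any control whose pass rate falls below a threshold. The record layout, the function name, and the 0.95 default threshold are assumptions for illustration only.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Verification:
    # One field check of a critical control (hypothetical record layout).
    control: str
    exists: bool
    used_correctly: bool
    effective: bool

    @property
    def passed(self) -> bool:
        # A control only counts as working if all three conditions hold in the same check.
        return self.exists and self.used_correctly and self.effective


def controls_needing_action(
    checks: list[Verification], threshold: float = 0.95
) -> dict[str, float]:
    """Return the pass rate of each control whose rate falls below the threshold."""
    totals: dict[str, int] = defaultdict(int)
    passes: dict[str, int] = defaultdict(int)
    for check in checks:
        totals[check.control] += 1
        passes[check.control] += int(check.passed)
    rates = {name: passes[name] / totals[name] for name in totals}
    return {name: rate for name, rate in rates.items() if rate < threshold}
```

Suppose two checks of a lockout/tagout verification step are recorded, and one finds the control in place but not used correctly. The function would report that control at a 0.5 pass rate. Requiring all three conditions in a single check is deliberate: a control that exists on paper but not in practice should not count as working.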

The final step is to scale learning across the ecosystem. Safety, operations, maintenance, engineering, contractors, suppliers, and frontline employees each see part of the risk picture. A shared SIF reduction strategy connects those signals into patterns the organization can act on.

The goal is to move learning at the speed of need. When a serious precursor is identified in one part of the organization, the lesson should travel quickly to every part of the organization where similar risk exists. That is how SIF reduction becomes scalable. That is how Operational Integrity becomes stronger.
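At its simplest, moving a lesson to every location with similar risk is a lookup against a register of where each serious exposure exists. The Python sketch below assumes a hypothetical exposure register that maps each site to the set of serious exposures present there. The function name and site names are illustrative, not part of any specific system.

```python
def sites_to_notify(
    exposure_register: dict[str, set[str]], source_site: str, exposure: str
) -> list[str]:
    """Return every other site carrying the same exposure, sorted for a stable distribution list."""
    return sorted(
        site
        for site, exposures in exposure_register.items()
        if exposure in exposures and site != source_site
    )


# Hypothetical register: which serious exposures exist at each site.
register = {
    "Plant A": {"mobile equipment", "work at height"},
    "Plant B": {"mobile equipment"},
    "Plant C": {"confined space"},
}
```

With this register, a mobile equipment precursor identified at Plant A would be routed to Plant B only, because Plant C does not carry that exposure.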

Better Data, Not More Data

The modern safety profession is surrounded by data. Observations, inspections, incident reports, audits, corrective actions, training records, claims, near misses, sensor outputs, contractor performance data, and operational metrics all contribute to the safety information ecosystem. But more data does not automatically mean better decisions.

A site may generate thousands of observations and still miss the few signals that matter most. A dashboard may be full of activity while the organization remains unclear about whether critical controls are actually effective. Better data separates noise from signal. It helps the organization see where serious risk is changing, where controls are weakening, where patterns are emerging, and where leadership attention is needed.

This is especially important because traditional lagging metrics often do not provide enough insight into severe-event potential. A site may have a low recordable injury rate and still carry significant SIF exposure. The objective is not to admire the data. The objective is to act before harm occurs.
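One way to separate signal from noise is to filter before counting. The Python sketch below assumes each observation has already been screened for SIF potential and tagged with a site and an exposure type. These fields are hypothetical. The sketch then ranks site and exposure pairs by how often high-potential signals recur, so a site with many routine housekeeping observations does not outrank a site with a few recurring line-of-fire signals.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Observation:
    # Hypothetical observation record; field names are illustrative only.
    site: str
    exposure: str        # e.g. "line of fire", "uncontrolled energy"
    sif_potential: bool  # already screened as involving serious energy or a failed critical control


def top_signals(
    observations: list[Observation], limit: int = 3
) -> list[tuple[tuple[str, str], int]]:
    """Keep only SIF-potential observations and rank site/exposure pairs by frequency."""
    counts = Counter(
        (obs.site, obs.exposure) for obs in observations if obs.sif_potential
    )
    return counts.most_common(limit)
```

Filtering first keeps the volume of low-consequence activity from drowning out the few exposures that warrant leadership attention.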

Keep It Simple

SIF reduction can feel complex on the ground. The terminology, classification methods, data systems, and analytical approaches can become overwhelming if they are not designed with the user in mind. That is why the KISS model matters: Keep It Simple.

A practical SIF approach should be simple enough for frontline employees to understand, supervisors to use, leaders to support, and executives to act on. The core questions do not have to be complicated:

- What serious risks exist in our work?

- What precursors tell us those risks may be increasing?

- What controls must be in place?

- How do we know those controls are working?

- What action is needed now?

The simpler the model, the more likely it is to be used consistently. Complexity may impress a conference room, but simplicity moves an organization. For SIF reduction to succeed, it must be easy to report concerns, easy to recognize high-risk conditions, easy to escalate weak signals, and easy for leaders to see where action is needed.

Technology Should Reduce Friction

Digital technology has changed what is possible in safety and health. Today’s tools can help democratize reporting, accelerate learning, connect information across sites, and surface patterns that would be difficult to detect manually.

When technology is designed well, it allows the people closest to the work to report hazards, concerns, near misses, and weak signals quickly. It gives leaders faster visibility into risk. It helps organizations understand where serious exposures are occurring and where critical controls may be weak.

But technology should reduce friction, not add complexity. If a digital tool creates more paperwork, more administrative burden, or more disconnected data, then it is not advancing the outcome. Safety technology should not be measured by how much information it collects. It should be measured by whether it helps the organization see risk earlier, act faster, and verify that controls are working.

Technology is not the control. The control is the safeguard, the procedure, the competency, the supervision, the planning, the maintenance, or the leadership action that reduces risk. Technology helps us see whether those controls are understood, used, effective, and improving.

That distinction is essential. SIF reduction is not about digitizing bureaucracy. It is about improving risk intelligence and accelerating prevention.

Ecosystem Partnerships Strengthen Risk Intelligence

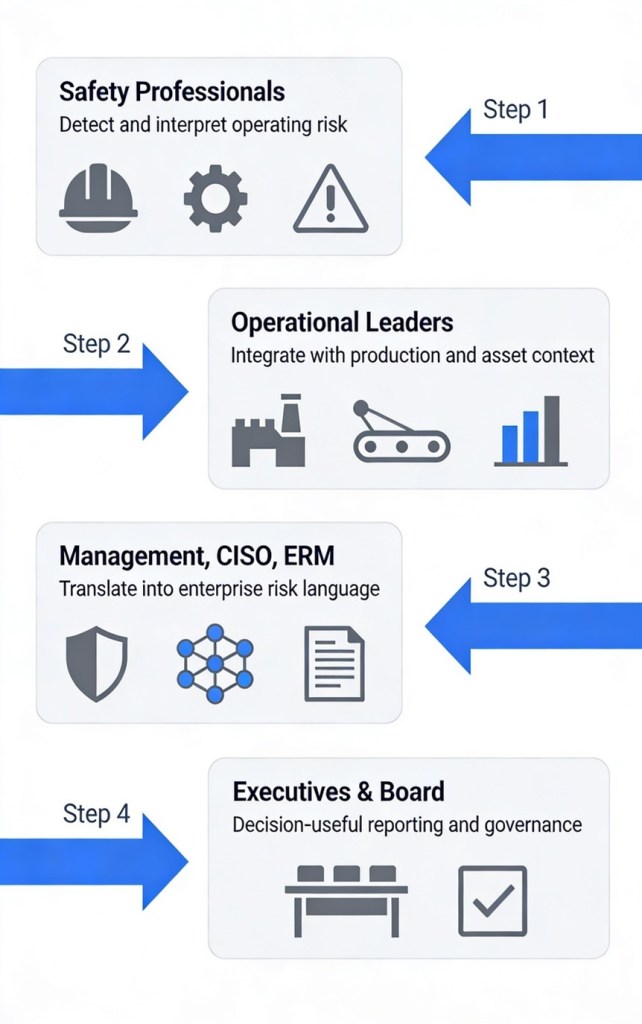

No single group sees the whole risk picture alone. The safety function sees one part. Operations sees another. Maintenance, engineering, contractors, suppliers, technology partners, frontline employees, supervisors, and executives all see different signals.

When those signals remain disconnected, the organization may miss important patterns. But when ecosystem partners are connected around a shared SIF reduction strategy, the organization gains a fuller and more useful view of risk.

A contractor may identify a recurring field condition. A frontline employee may report a concern about a task. A supervisor may notice a planning weakness. A technology partner may help reveal a trend across locations. A safety professional may connect those signals to a critical control gap. A business leader may remove a barrier to action.

That is the power of ecosystem thinking. The goal is not to create more channels of information. The goal is to create better insight and faster learning. Strong ecosystem partnerships help organizations move from isolated observations to shared risk intelligence. That is how safety systems become more predictive, more practical, and more effective.

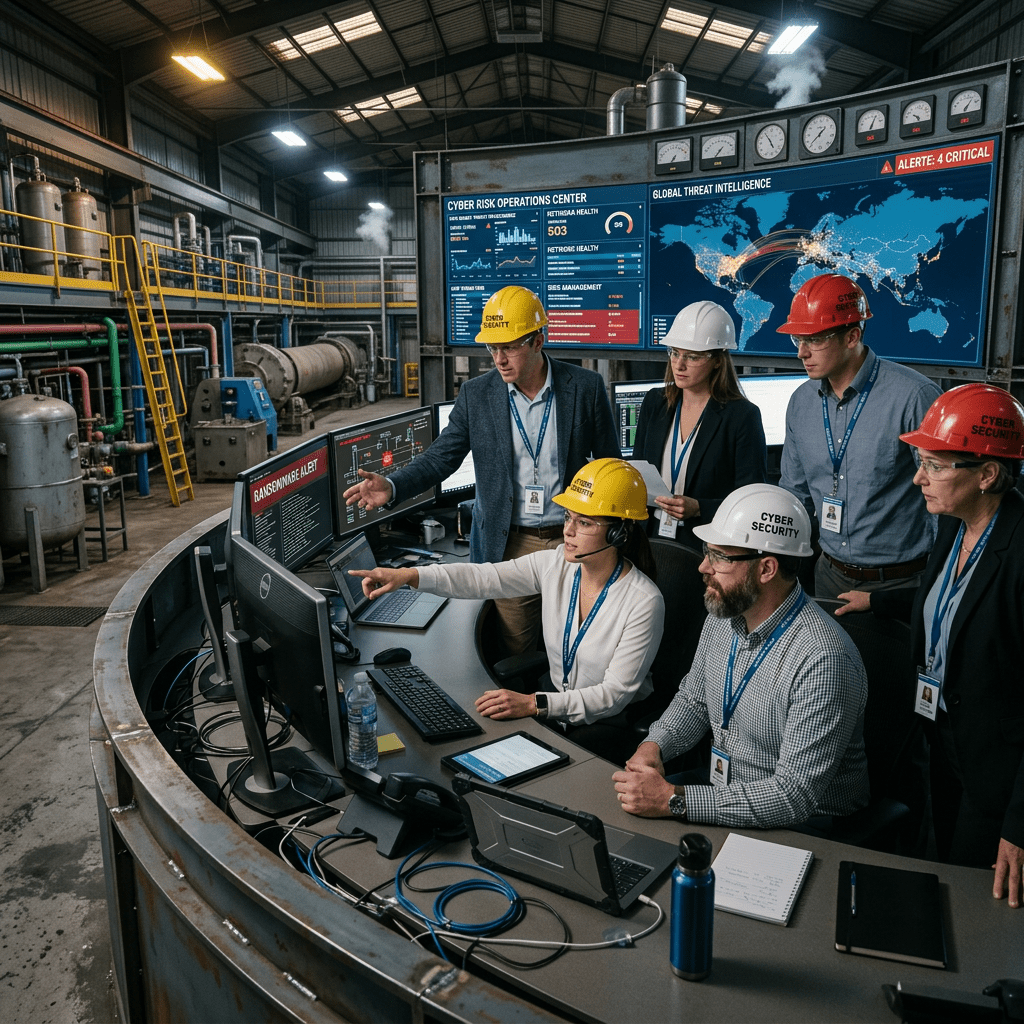

Human + AI: Working Ahead of Harm

The next frontier in SIF reduction is the collaboration of humans and artificial intelligence. AI can help organize information, detect patterns, identify weak signals, and surface insights at a speed and scale that traditional processes cannot match. But AI does not replace the judgment, ethics, experience, and leadership that safety professionals bring to the work.

AI can help find the signal. People turn that signal into action.

Organizations should not start with AI as the project. They should start with the risk. Choose one serious exposure that matters: uncontrolled energy, line of fire, work at height, mobile equipment interaction, confined space entry, chemical exposure, process safety risk, or another high-consequence activity. Then ask how people, data, technology, and AI can help identify precursors, verify controls, and act before harm occurs.

The practical starting point is simple:

- Pick one serious risk.

- Identify the key precursors.

- Use better data to understand where the risk is showing up.

- Verify whether the critical controls are working.

- Take action before someone gets hurt.

- Measure improvement.

- Scale what works.

This is Human + AI collaboration in service of prevention. It is not technology for technology’s sake. It is technology enabling people to work ahead of harm.

From Safety Activity to Risk Reduction

One of the challenges in safety leadership is that organizations can become very busy with activity: inspections completed, audits conducted, observations submitted, training delivered, actions closed. Those activities matter, but they are not the final measure of success.

The deeper question is whether the work is reducing serious risk.

A strong SIF program helps answer that question. It directs attention to the exposures with the highest consequence. It helps leaders prioritize limited resources. It strengthens operational discipline. It connects field-level insight with executive decision-making. It creates a line of sight between safety action and business performance.

That is why SIF reduction is such a powerful leading component of Operational Integrity. It helps the organization move from counting events to controlling risk. It helps shift leadership from reacting to learning. It helps safety professionals demonstrate measurable value. And it helps the business protect its most important asset: its people.

What Safety Leaders Can Do Now

For leaders who want to move from compliance to possibility, the starting point does not have to be complicated. Start with one serious risk. Bring together the people who understand the work. Define the critical controls. Look for the precursors. Use technology to improve visibility. Use data to guide action. Use leadership to remove barriers. Use the ecosystem to learn faster. Then measure whether risk is actually being reduced.

That is the practical path forward. It is simple, but it is not small. Done consistently, it can change how an organization understands and manages serious risk.

Conclusion: The Future Is Proactive

The future of safety leadership will not be defined by organizations that collect the most data or create the most complicated programs. It will be defined by organizations that use better data, simpler systems, stronger partnerships, and disciplined leadership to prevent the events that matter most.

SIF reduction gives us a focused way to do that. It helps us move beyond compliance and into Operational Integrity. It helps us connect safety to Business Excellence. It helps us use technology without losing sight of people. It helps us turn weak signals into action. Most importantly, it helps us protect people before serious harm occurs.

Compliance is the floor. Operational Integrity is the operating system. SIF reduction is the leading edge of prevention.

The organizations that lead the next era of safety will not be the ones with the most data. They will be the ones that turn the right data into action before serious harm occurs.

And Human + AI collaboration gives us the power to work ahead of harm and keep people safe.

References

Brandon, C. (2026, May 11). Operational Integrity: The Operating Model Heavy Manufacturing Needs. LeadingEHS.com. https://leadingehs.com/2026/05/11/operational-integrity-the-operating-model-heavy-manufacturing-needs/

National Safety Council. (n.d.). Serious Incident and Fatality Prevention Model. https://www.nsc.org/workplace/sif-prevention-model

National Safety Council. (n.d.). Serious Incident and Fatality (SIF) Prevention Model: Guidebook. https://www.nsc.org/getmedia/abdc94f2-651b-4843-919c-81e1629755fe/sif-guidebook.pdf

National Safety Council. (n.d.). SIF Prevention Model Definitions and Key Terms. https://www.nsc.org/getmedia/f4c01a6a-cc80-4f19-b64d-11d95142c840/sif-model-key-terms-definitions.pdf